Hugging Face Transformers on MacBook Pro M1 GPU

Introduction

When Apple introduced the ARM-based M1 series with a unified GPU, I was very excited to use that GPU for deep learning experiments. I usually use Colab and Kaggle for general training and exploration. Now is the right time to try the M1 GPU, because Hugging Face has introduced mps device support (Mac M1 mps integration). This lets users leverage Apple M1 GPUs through the mps device type in PyTorch for faster training and inference than on the CPU.

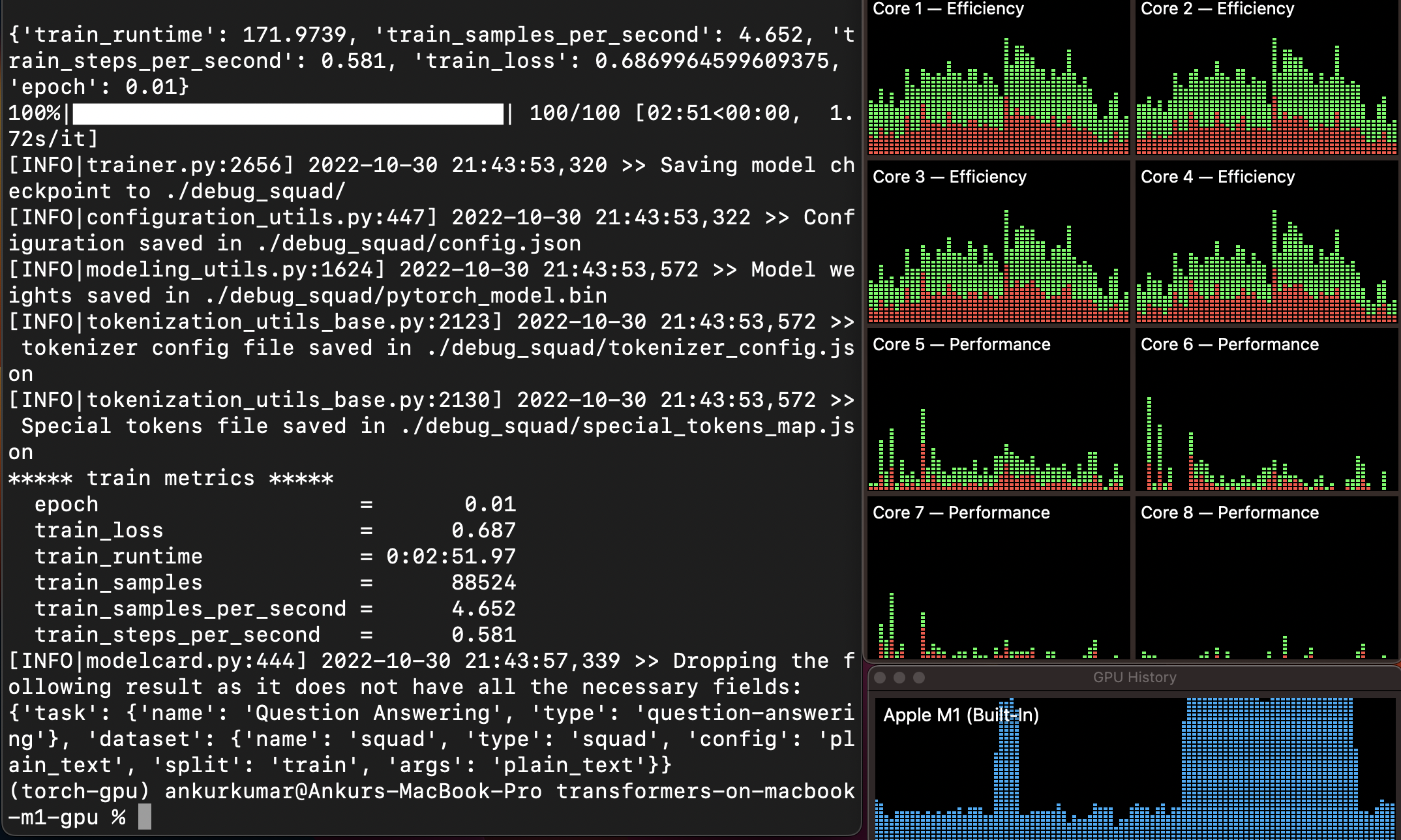

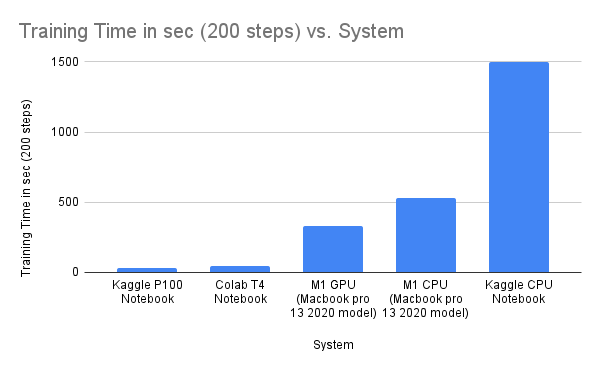

On a 2020 M1 MacBook Pro with an 8-core GPU, I got a 1.5-2x improvement in training time compared to training on the M1 CPU of the same device.

Install PyTorch on MacBook M1 GPU

Step 1: Install the Xcode command line tools

$ xcode-select --install

Step 2: Set up a new conda environment

$ conda create -n torch-gpu python=3.8

$ conda activate torch-gpu

Step 3: Install PyTorch

$ conda install pytorch torchvision torchaudio -c pytorch-nightly

# If the conda install does not work, you may try pip

$ pip3 install --pre torch torchvision torchaudio --extra-index-url https://download.pytorch.org/whl/nightly/cpu

Step 4: Sanity Check

import torch

# True only if macOS is 12.3+ and an MPS-capable device is present
print(torch.backends.mps.is_available())

# True only if the current PyTorch installation was built with MPS support
print(torch.backends.mps.is_built())
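Once both checks pass, a common pattern is to pick the device at runtime and fall back to the CPU otherwise. The snippet below is only a minimal sketch: the helper name `pick_device` is my own and not part of PyTorch, and the `getattr` guard assumes that older PyTorch builds may not ship `torch.backends.mps` at all.

```python
import torch


def pick_device() -> torch.device:
    """Return the MPS device when usable, otherwise the CPU (hypothetical helper)."""
    # Assumption: PyTorch builds older than 1.12 have no torch.backends.mps module.
    mps = getattr(torch.backends, "mps", None)
    if mps is not None and mps.is_built() and mps.is_available():
        return torch.device("mps")
    return torch.device("cpu")


device = pick_device()

# Allocate a small tensor on the chosen device and run one operation on it.
x = torch.ones(3, device=device)
total = (x * 2).sum().item()  # .item() copies the scalar back to Python

print(f"device={device.type}, total={total}")
```

If this prints `device=cpu` on an M1 machine, the two checks above should show which requirement is not met.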

Hugging Face transformers Installation

Step 1: Install Rust

$ curl --proto '=https' --tlsv1.2 -sSf https://sh.rustup.rs | sh

Step 2: Install transformers

$ pip install transformers

Let's try to train a QA model

$ git clone https://github.com/Ankur3107/transformers-on-macbook-m1-gpu.git

$ cd transformers-on-macbook-m1-gpu

$ sh run.sh
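The contents of run.sh are not reproduced here. As a rough illustration of what placing a question-answering model on the mps device looks like in plain PyTorch, here is a small sketch. It assumes a tiny, randomly initialised BERT built from a config (so nothing is downloaded) and random token ids instead of real data; none of these sizes come from the repository.

```python
import torch
from transformers import BertConfig, BertForQuestionAnswering

# Use the M1 GPU when PyTorch exposes it, otherwise stay on the CPU.
mps = getattr(torch.backends, "mps", None)
device = torch.device("mps" if mps is not None and mps.is_available() else "cpu")

# Assumption: a toy configuration chosen only to keep the example fast.
config = BertConfig(
    vocab_size=100,
    hidden_size=32,
    num_hidden_layers=1,
    num_attention_heads=2,
    intermediate_size=64,
)
model = BertForQuestionAnswering(config).to(device)
model.eval()

# Fake batch: 2 sequences of 16 token ids, created directly on the device.
input_ids = torch.randint(0, config.vocab_size, (2, 16), device=device)

with torch.no_grad():
    outputs = model(input_ids=input_ids)

# The QA head returns one start logit and one end logit per token position.
print(outputs.start_logits.shape, outputs.end_logits.shape)
```

The key point is that both the model and its inputs have to live on the same device; for real training you would load a pretrained checkpoint and a tokenized dataset instead of the toy values used here.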

Benchmark

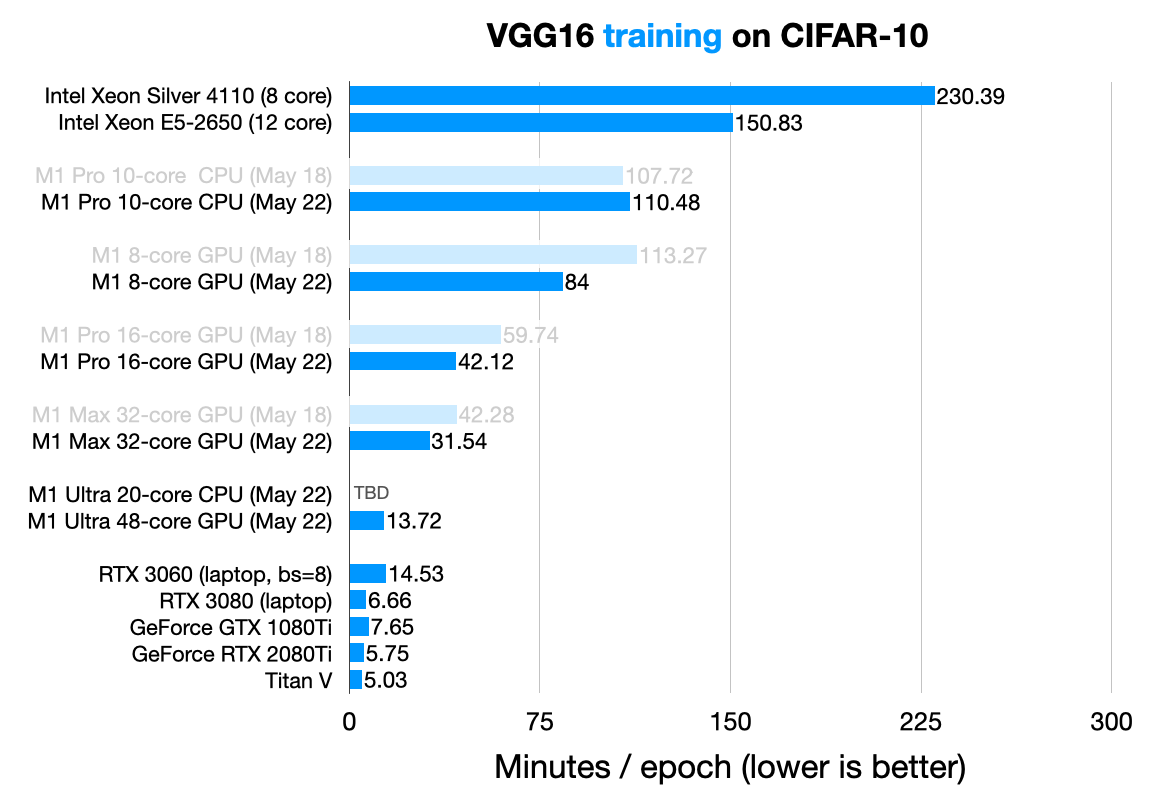

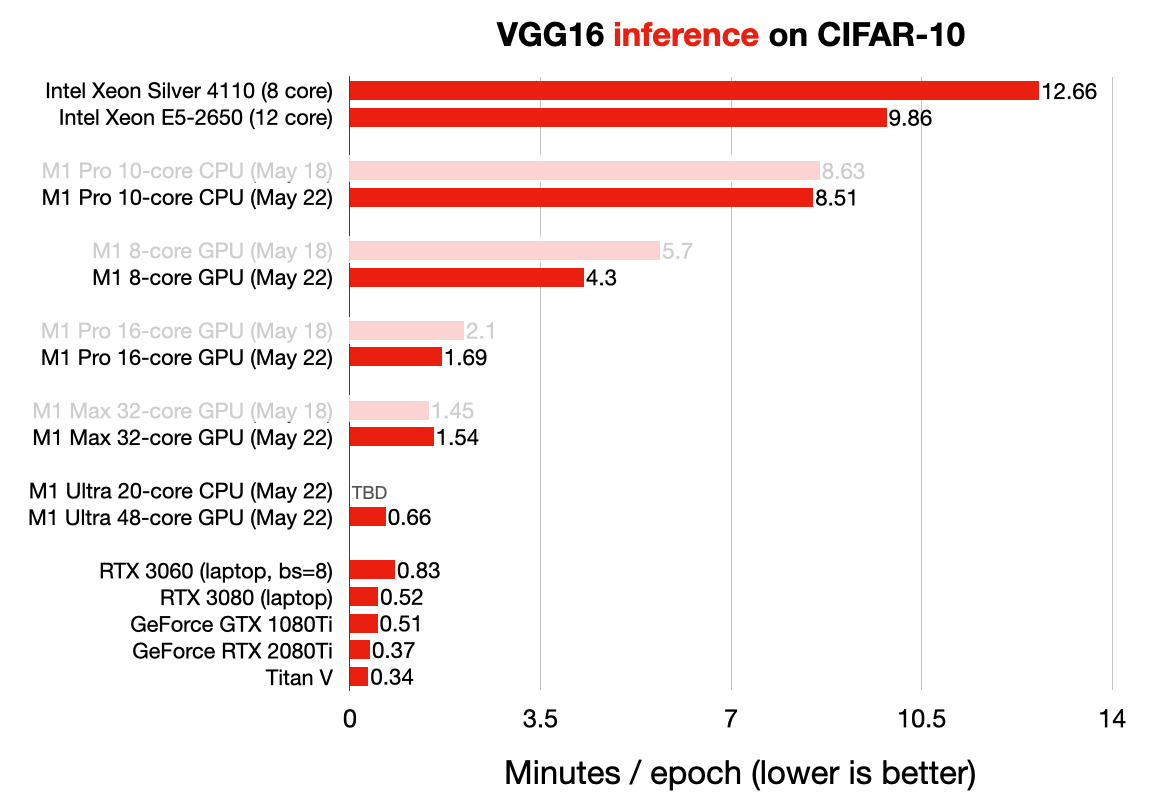

For more benchmarks:

Sebastian Raschka has written an excellent blog post that benchmarks different M1-based systems on VGG16 training and inference. Please do check it out.
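To get a rough feel for the CPU vs. mps gap on your own machine, a micro-benchmark along the following lines could be used. This is only a sketch under my own assumptions: `time_matmul` is a hypothetical helper, the matrix size and repeat count are arbitrary, and a plain matrix multiply is not representative of transformer training, so the numbers will not match the benchmarks above.

```python
import time

import torch


def time_matmul(device: torch.device, size: int = 512, repeats: int = 5) -> float:
    """Average seconds per (size x size) matrix multiply on `device` (hypothetical helper)."""
    a = torch.randn(size, size, device=device)
    b = torch.randn(size, size, device=device)
    (a @ b).sum().item()  # warm-up; .item() waits for the device to finish
    start = time.perf_counter()
    for _ in range(repeats):
        (a @ b).sum().item()  # force each multiply to complete before the clock stops
    return (time.perf_counter() - start) / repeats


timings = {"cpu": time_matmul(torch.device("cpu"))}

# Only time the GPU when PyTorch reports the mps device as available.
mps = getattr(torch.backends, "mps", None)
if mps is not None and mps.is_available():
    timings["mps"] = time_matmul(torch.device("mps"))

for name, seconds in timings.items():
    print(f"{name}: {seconds * 1000:.2f} ms per matmul")
```

Reading the result back with `.item()` matters here, because GPU work is queued asynchronously and the timer would otherwise stop before the multiply has actually finished.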

References

- https://github.com/Ankur3107/transformers-on-macbook-m1-gpu

- https://pytorch.org

- https://towardsdatascience.com/installing-pytorch-on-apple-m1-chip-with-gpu-acceleration-3351dc44d67c

- https://jamescalam.medium.com/hugging-face-and-sentence-transformers-on-m1-macs-4b12e40c21ce

- https://pytorch.org/blog/introducing-accelerated-pytorch-training-on-mac/

- https://sebastianraschka.com/blog/2022/pytorch-m1-gpu.html
